MongoDB AMP Test Generation & Validation

Timeline

Sep '25 - Oct '25

Team

Senior Product Manager, Senior Staff Engineer

TL;DR

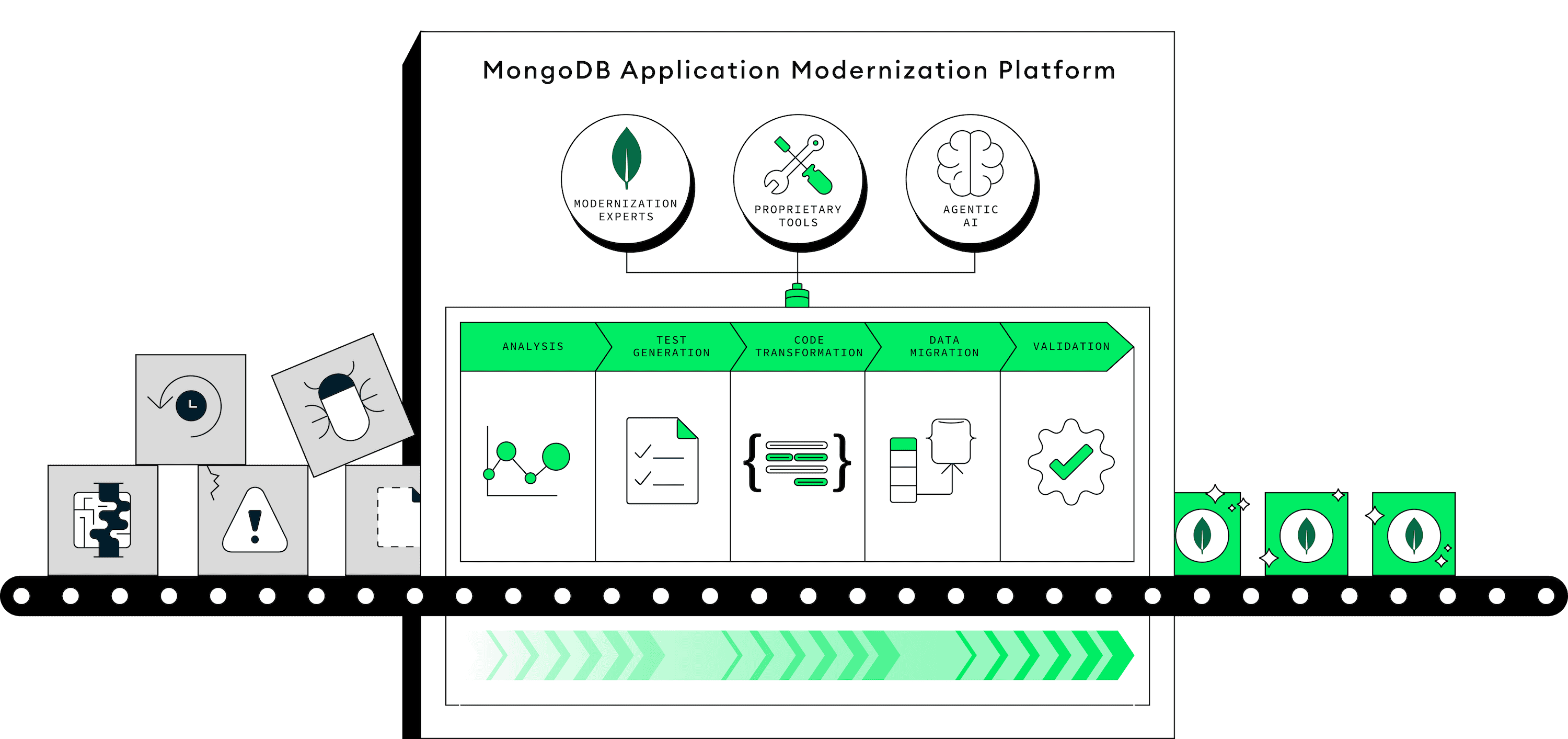

The App Modernization Platform (AMP) is an early-stage B2B consulting and developer tooling initiative that helps enterprises migrate from relational databases to MongoDB (a non-relational database) while modernizing their legacy apps.

I led discovery research to unblock AMP’s testing strategy by defining success criteria and shaping the 12-month roadmap for developer testing tools. Because modernization can’t succeed without validating that transformed code works as expected, this work established standardized testing tools now scaled across consulting teams and integrated with app analysis and AI-driven code transformation workflows.

Modernizing legacy apps is slow and complex, so the AMP team was formed to help enterprises do it faster and more safely with AI developer tools and expert consulting services

As a UX researcher on AMP, I supported 5+ globally distributed product teams that cover every step of modernization from app analysis and data modeling to data migration, testing, and code generation.

Testing is a core part of modernization: consultants ensure the updated code remains functionally equivalent to the original before delivering it to the customer

Participant

"Testing is probably even more important than the actual modernization itself because if you can't verify your stuff, then what are you modernizing?"

I noticed from NPS survey feedback that users were concerned testing was being neglected compared to other areas, because without it, they can’t verify their work or win over our customers

Testing had been deprioritized until a small team was assigned to it. However, they lacked a formal strategy and had only 1 month to define one, so I jumped in to lead discovery

To ensure the strategy was grounded in data and led to meaningful outcomes, I partnered closely with the PM and engineer and set up a weekly working session to align on research and direction.

In our first meeting, we clarified the outcomes we wanted from the study, aligned on how we would collaborate, and discussed key technical constraints that would shape the research.

I remembered that in past interviews, consultants talked a bit about testing

As a starting point, I fed that raw interview data into NotebookLM and extracted anything related to testing

Using AI helped me quickly get some context around testing on AMP and answer some questions we had. This way I could prepare richer, more informed questions for primary research.

We needed to know exactly how consultants were approaching testing on their projects, so I led contextual interviews with 8 consultants to walk through their testing workflow on a real customer project step-by-step

I recruited consultants who had experience with testing on modernization projects. Since testing comes later in the project lifecycle, I focused recruiting on the most mature or completed projects.

Observing how consultants approach testing in real customer projects helped us identify the real challenges and workarounds, like what tools they built themselves or tried to use and the constraints they faced. This allowed us to see what works, what doesn’t, and how to scale proven strategies without repeating mistakes.

Through semi-structured, contextual remote sessions, consultants walked us through how they validate source and target systems. Some shared code in their IDE, others used diagrams or planning documents, and some explained their process verbally due to confidentiality.

As consultants walked us through their testing processes, I mapped steps, tools, and pain points live on a Lucid board to enable cross-comparison and give my stakeholders immediate visibility into emerging patterns

Since I collaborated closely with my PM and engineer, they were able to begin their work while I finalized a report that clearly connected insights to specific product opportunities

I consolidated everything into a report with actionable recommendations that the testing team (and stakeholders reviewing their strategies!) could easily reference.

With discovery projects, I sometimes present insights in a deck to discuss and align a whole team, but because this was a fast-moving group of 2 people, I opted to create a scannable doc with actionable recommendations and user evidence. That, combined with our weekly check-ins and async collaboration, ensured they could move forward.

I presented my insights at the AMP All Hands to show leadership and the org how the research shaped the new testing strategy (and that I’m someone teams can tap to answer questions about users across products!)

At our monthly All Hands, leadership and 5+ engineering teams get updates on org-wide work. With a broad audience and limited time, I summarized my insights in a couple of slides before my PM and engineer dove into the technical designs.

My goal was to show how user insights drove our decisions and make my work visible across product teams.

Impact: My insights directly influenced the 12-month roadmap for the testing team, resulting in 2 standardized testing tools now scaled across AMP to accelerate the delivery of customer projects

with future initiatives coming soon!